
This PWXEDM CICS/VSAM Change Capture report includes the following fields:

- Init Date. The date, in mm/dd/yy format, on which the ECCR initialized.
- ID. The ECCR name.
- Time. The time, in hh:mm:ss format, at which the ECCR initialized.
- File Name. The names of the VSAM files that participate in change data capture.
- Dataset Name. The fully-qualified data set names of the VSAM source data sets that participate in change data capture.
- Type. The type of VSAM data set. Valid values are:
  - KSDS. A key-sequenced data set.
  - ESDS. An entry-sequenced data set.
  - RRDS. A relative record data set (RRDS) or variable-length relative record data set (VRRDS).
  - Path. An alias path to a VSAM data set.
  - P/AX. A CICS alternate index (AIX) path to a VSAM data set.
- Warn/Error. A warning or error flag. Valid values are:
  - (During PLTPI). Indicates the ECCR automatically initialized during the third stage of CICS initialization because you specified the EDMKOPER module name in the PLT initialization list.
  - NoCapture. Indicates that the data set is not participating in change capture because the DSN= datasetname,NOCAPTURE or CAPTURE vsamdatasettype=OFF override option is specified in the EDMKOVRD DD statement in the CICS region startup JCL or in the data set to which the DD statement points.

The Normalizer transformation is active and connected.

- The Normalizer transformation is used in place of the Source Qualifier transformation when you read data from a COBOL source.
- The Normalizer transformation converts column-wise data to row-wise data and generates an index for each converted row. This is similar to the transpose feature of MS Excel. You can use this feature if your source is a COBOL file or a relational database table. Because the number of rows in the output generally increases, it is an active transformation.
- Normalization is the process of organizing data. In database terms, this includes creating normalized tables and establishing relationships between those tables according to rules designed to both protect the data and make the database more flexible by eliminating redundancy and inconsistent dependencies.
- The Normalizer transformation normalizes records from COBOL and relational sources, allowing you to organize the data according to your own needs.
- A Normalizer transformation can appear anywhere in a pipeline when you normalize a relational source.
- Use a Normalizer transformation instead of the Source Qualifier transformation when you normalize a COBOL source. When you drag a COBOL source into the Mapping Designer workspace, the Mapping Designer creates a Normalizer transformation with input and output ports for every column in the source.
- You primarily use the Normalizer transformation with COBOL sources, which are often stored in a denormalized format. The OCCURS statement in a COBOL file nests multiple records of information in a single record.
- Using the Normalizer transformation, you break out repeated data within a record into separate records. For each new record it creates, the Normalizer transformation generates a unique identifier.
- You can use this key value to join the normalized records. You can also use the Normalizer transformation with relational sources to create multiple rows from a single row of data.

Occurs: The number of instances of a column or group of columns in the source row.

Generated Key:

- The Designer generates a port for each REDEFINES clause to specify the generated key. You can use the generated key as a primary key column in the target table and to create a primary and foreign key relationship.
- The naming convention for the Normalizer generated key is: GK.
- The Designer adds one column (GKFILEONE) for each REDEFINES in the COBOL source. The Normalizer GK columns tell you the order of records in a REDEFINES clause.

Example of Normalizer: Developers sometimes need to transform one source row into multiple target rows.
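The row-splitting idea described above can be sketched in plain Python. This is only an illustration of the concept, not how PowerCenter implements it: the function name `normalize`, the field names `store` and `sales`, and the output columns `occurrence_index` and `generated_key` are all hypothetical, and the sketch assumes a running key per output record as the text describes.

```python
from itertools import count


def normalize(rows, repeating_field, key_start=1):
    """Turn each occurrence of a repeating field into its own output row.

    Hypothetical helper: the column names below are made up for this sketch.
    """
    keys = count(key_start)  # running identifier, one per output record
    out = []
    for row in rows:
        # Columns that are not part of the repeating group are copied as-is.
        fixed = {k: v for k, v in row.items() if k != repeating_field}
        for index, value in enumerate(row[repeating_field], start=1):
            out.append({
                **fixed,
                repeating_field: value,
                "occurrence_index": index,     # position within the repeating group
                "generated_key": next(keys),   # unique per output record
            })
    return out


# One denormalized source row with four occurrences of "sales".
source = [{"store": "S1", "sales": [100, 200, 300, 400]}]
result = normalize(source, "sales")
for record in result:
    print(record)
```

One source row with four occurrences yields four output rows, which is why the row count generally grows.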

Expat (Global Moderator, joined 14 Mar 2007, Welsh Wales), posted Wed Jul 23, 2008 2:49 pm: The PRINT command of what? IDCAMS, COBOL, SAS?

Rajesh Sampath (New User, joined 18 Jun 2008, Pune), posted Wed Jul 23, 2008 3:24 pm, subject "Reply to: Opening a VSAM file": You mean the PRINT statement, print ids(/) ch?

ritesh pandey (New User, joined 09 Feb 2007, Pune), posted Wed Jul 23, 2008 4:01 pm: Thanks, Rajesh, for the quick reply, but I have already tried.

Tip 1: Ignore the SQ SQL Override conditionally

You can do this by defining a mapping parameter for the WHERE clause of the SQL Override. When you need all records from the source, define this parameter as 1=1 in the parameter file; when you need only selected data, set the parameter accordingly.

Tip 2: Overcome the size limit for a SQL Override in a PowerCenter mapping

The SQL editor for SQL query overrides has a limit of 32,767 characters. To source a SQL query of more than 32,767 characters, do the following:

Vsam Files In Informatica Basicat

1. Create a database view using the SQL query override.
2. Create a Source Definition based on this database view.
3. Use this new Source Definition as the source in the mapping.

Tip 3: Export an entire Informatica folder to an XML file

We can do this in 8.1.1:

1. In Designer, select Tools - Queries and create a new query. Set the parameter name 'Folder' equal to the folder you want to export, and then run the query.
2. In the Query Results window, choose Edit - Select All. Then select Tools - Export to XML File and enter a file name and location. The full folder will be exported to an XML file.

We can also use the query tool in Repository Manager to get everything in the folder (mappings, sessions, workflows, etc.).

Tip 4: Validate all mappings in a folder

We can validate all mappings in a folder in the following way:

1. Go to the Repository Manager client.

We have tried to come up with some best practices in Informatica:

1) Always try to add an Expression transformation after the Source Qualifier and before the target.

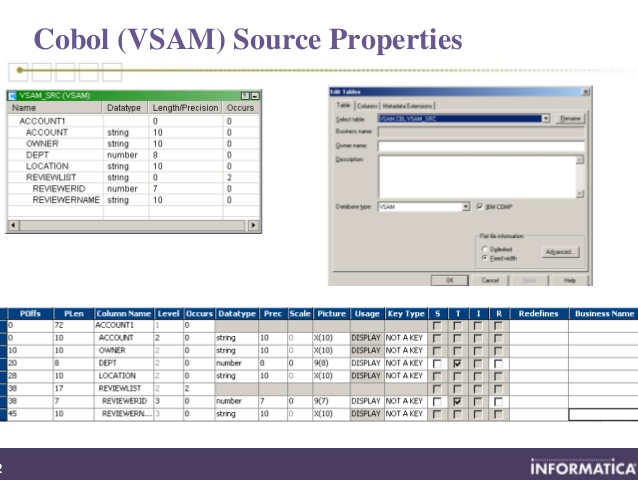

Normalizer transformation:

The Normalizer transformation is used with COBOL sources, which are often stored in a denormalized format. The OCCURS statement in a COBOL file nests multiple records of information in a single record. We can use the Normalizer transformation to break out repeated data within a record into separate records. For each new record it creates, the Normalizer transformation generates a unique identifier.

Step 1: Create the copybook for the COBOL source

The first step is to get the copybook from the mainframe team and convert it to an Informatica-compliant format. Normally, the highlighted section is provided by the mainframe team. Convert it into the required format by adding lines above that code (from identification to fd FNAME) and below that code (starting from the working-storage section). After making the changes, save the file as a .cbl file.

Points to take care of while editing the .cbl file

You might get the following error:

identification division.
program-id. Mead.
environment division.
select Error at line 6: parse error

Things to take care of:

1. The SELECT FNAME line should not start before column position 12.
2. The other lines that have been added above and below should not start before column 9.
3. All the lines in the structure (highlighted above) should end with a dot.

Once the COBOL source is imported successfully, you can drag the Normalizer source into the mapping.

Step 2: Set workflow properties properly for the VSAM source

Once you have successfully imported the COBOL copybook, you can create your mapping using the VSAM source.

After creating the mapping, you can create your workflow. Take care of the following properties in the session containing the VSAM source: in the source advanced file properties, set the following options (the highlighted ones).

Important: Always ask for the COBOL source file to be in binary format; otherwise you will face a lot of problems with COMP-3 fields. Once you have set these properties, you can run your workflow.

COMP-3 fields:

COBOL COMP-3 is a binary field type that puts ('packs') two digits into each byte, using a notation called Binary Coded Decimal, or BCD. This halves the storage requirements compared to a character, or COBOL 'display', field.
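To make the packing concrete, here is a minimal Python sketch of decoding a packed field. It assumes the usual packed-decimal layout, in which every half-byte (nibble) holds one digit except the last nibble, which holds the sign (commonly C for positive, D for negative, F for unsigned). The function name `unpack_comp3` and the `scale` parameter (number of implied decimal places) are hypothetical; this is not part of any Informatica API.

```python
from decimal import Decimal


def unpack_comp3(raw: bytes, scale: int = 0) -> Decimal:
    """Decode a packed-decimal (COMP-3) field; `scale` is the implied decimal count."""
    if not raw:
        raise ValueError("empty field")
    digits = []
    for byte in raw[:-1]:
        digits.append(byte >> 4)    # high nibble: one digit
        digits.append(byte & 0x0F)  # low nibble: one digit
    digits.append(raw[-1] >> 4)     # last byte: one digit ...
    sign_nibble = raw[-1] & 0x0F    # ... followed by the sign nibble
    # Digit nibbles must be 0-9 and the sign nibble must be A-F; anything
    # else suggests the data is not actually packed.
    if any(d > 9 for d in digits) or sign_nibble < 0x0A:
        raise ValueError("not valid packed-decimal data")
    value = int("".join(str(d) for d in digits))
    if sign_nibble in (0x0B, 0x0D):  # B and D mark negative values
        value = -value
    return Decimal(value).scaleb(-scale)


print(unpack_comp3(bytes([0x12, 0x34, 0x5C])))           # three bytes, five digits
print(unpack_comp3(bytes([0x12, 0x34, 0x5D]), scale=2))  # negative, two decimals
```

Passing character ('display') data to the same function raises `ValueError`, which is the kind of mismatch between definition and file that causes trouble with these fields.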

COMP-3 is a common data type, even outside of COBOL.

Common issues faced while working with COMP-3 fields:

- You have created your COBOL source definition with COMP-3 fields (packed data), but the actual data in the source file is not packed. Make sure that the data is in the same format in both the definition and the source file.
- Check whether the COMP-3 fields are signed or unsigned.

Enhancements in Informatica 8.6:

- Target from transformation: In Informatica 8 we can create a target from a transformation by dragging the transformation into the Target Designer.
- Pushdown optimization: Increases performance by pushing transformation logic to the database, analyzing the transformations and issuing SQL statements to sources and targets.

Suppose we have input data coming in as Firstname1, Ph1, Address1, Firstname2, Ph2, Address2, Firstname3, Ph3, Address3. You want data in the output like, i.e.
